In this decision, the European Patent Office did not grant a patent on the concept of improving the quality of computer-generated exams by utilizing empirical data of questions from previous exams. Here are the practical takeaways of the decision T 2087/15 of 21.7.2020 of the Technical Board of Appeal 3.4.03:

Key takeaways

The invention

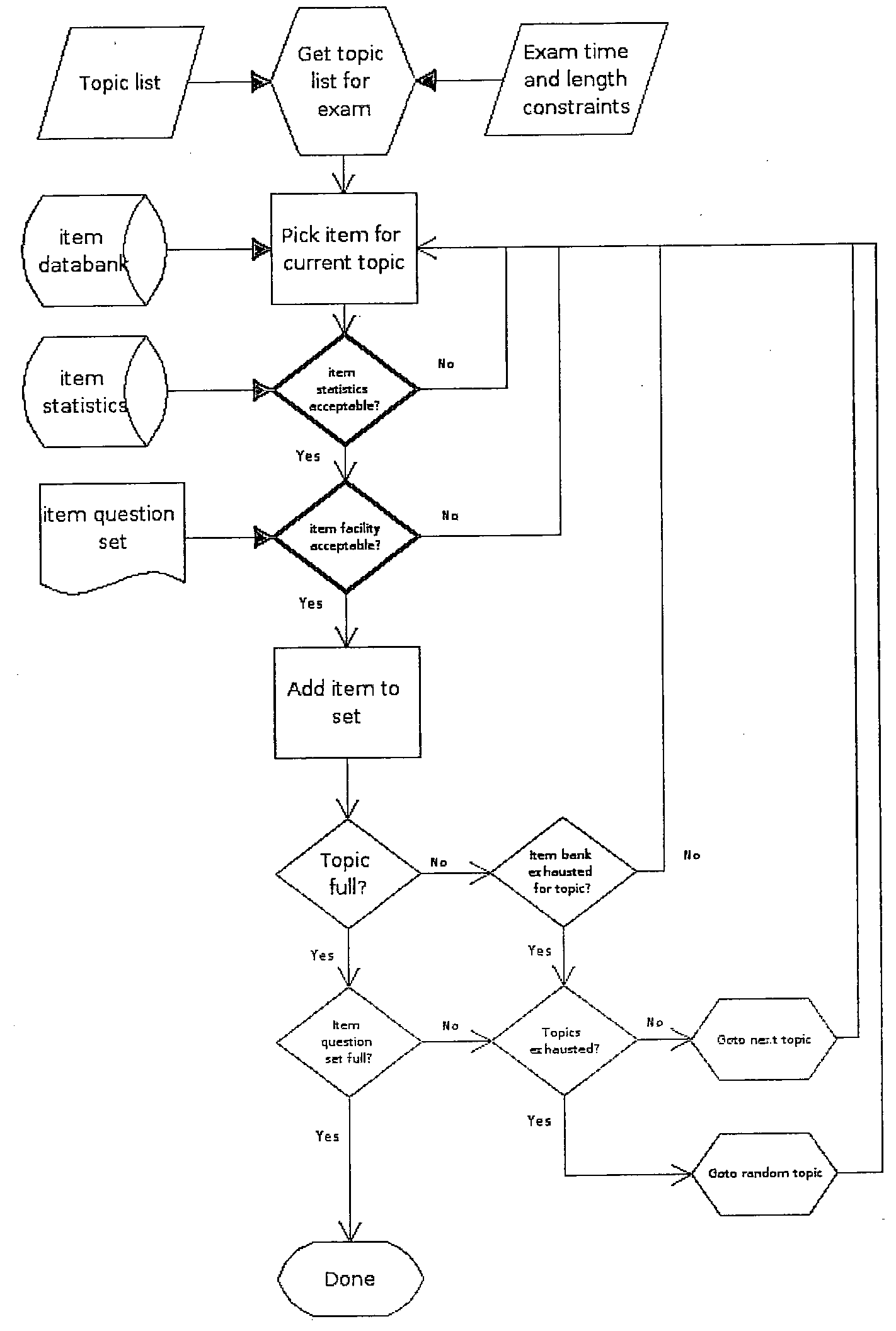

This European patent application relates to a method for improving the quality of computer-generated sets of examination questions utilizing empirical data of question items from previous exams.

The data are stored in a database of a data storage device and can include e.g. facility/difficulty, selectivity, distribution of answers, the correlation between the quality of the answers given to the question and the quality of the rest of the exam given by a person, the deviation in the responses given to the question, or other criteria. The method comprises a step of selecting a random question from the database and evaluating the selected question based on predetermined requirements for the possible inclusion in a question set (e.g. an exam or a questionnaire) for presentation to exam candidates. Questions found not fulfilling the requirements are discarded so that a question set of better quality is obtained. The steps of storing data, selecting, evaluating and possibly discarding question items are performed by computer-based means.
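To make two of the stored statistics concrete, here is a minimal sketch of how they could be computed from past exam results. It is an illustration only, not the applicant's implementation: the function names `facility` and `item_rest_correlation`, the 0/1 scoring of answers, and the choice of a Pearson correlation are all assumptions made for this example.

```python
from statistics import mean, pstdev


def facility(item_scores):
    """Share of correct answers for one question (1 = correct, 0 = wrong)."""
    return sum(item_scores) / len(item_scores)


def item_rest_correlation(item_scores, total_scores):
    """Pearson correlation between a question's score and the score on the
    rest of the exam (total score minus this question), per candidate."""
    rest = [total - item for item, total in zip(item_scores, total_scores)]
    sd_item, sd_rest = pstdev(item_scores), pstdev(rest)
    if sd_item == 0 or sd_rest == 0:
        # Correlation is undefined without variation; this sketch returns 0.
        return 0.0
    mean_item, mean_rest = mean(item_scores), mean(rest)
    covariance = mean(
        (i - mean_item) * (r - mean_rest) for i, r in zip(item_scores, rest)
    )
    return covariance / (sd_item * sd_rest)


# Hypothetical data: four candidates, one question, and their exam totals.
item = [1, 1, 0, 0]
totals = [4, 3, 1, 2]
print(facility(item))
print(item_rest_correlation(item, totals))
```

A high correlation means that candidates who answered the question correctly also tended to do well on the rest of the exam.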

Here is how the invention is defined in claim 1:

Claim 1 (sole request)

Is it technical?

The first-instance examining division had refused the patent application based on lack of inventive step.

The board of appeal based its analysis on independent method claim 8, which was broader than system claim 1, because claim 8 lacked the step of indicating “a quality of the new question set by comparing an actual reliability of the new question set in use to a calculated reliability, assuming …”. According to the board, the aim of the present invention as defined in claim 8 was to generate exams, questionnaires or similar skill-level tests administered in large numbers, by:

a) randomly selecting a question within one or more predetermined topics from a number of existing questions in at least one topic, each question of said number of existing questions being associated with a data set related to answers given to the questions in previous exams,

b) evaluating the selected questions relative to predetermined requirements related to a measure based on facility, selectivity and distribution corresponding to said data set for possible inclusion in a question set for presentation to exam candidates and

c) discarding questions not fulfilling said requirements thereby generating a question set for presentation to exam candidates.
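Steps a) to c) can be sketched in a few lines of code, which also shows how little implementation detail they require. This is a hedged illustration, not the claimed method itself: the `Question` fields, the threshold values and the function names are assumptions, and the "distribution" criterion is left out for brevity.

```python
import random
from dataclasses import dataclass


@dataclass
class Question:
    text: str
    topic: str
    facility: float     # share of correct answers in previous exams
    selectivity: float  # how well the question separates strong from weak candidates


def meets_requirements(q, min_facility=0.3, max_facility=0.9, min_selectivity=0.2):
    """Step b): evaluate a question against predetermined (here: assumed) thresholds."""
    return min_facility <= q.facility <= max_facility and q.selectivity >= min_selectivity


def generate_question_set(pool, topic, size, rng=None):
    """Draw questions at random from one topic and keep only those that pass."""
    if rng is None:
        rng = random.Random()
    candidates = [q for q in pool if q.topic == topic]
    rng.shuffle(candidates)  # step a): random selection without replacement
    selected = []
    for q in candidates:
        if not meets_requirements(q):
            continue  # step c): discard questions not fulfilling the requirements
        selected.append(q)
        if len(selected) == size:
            break
    return selected


# Hypothetical pool of previously used questions.
pool = [
    Question("q1", "traffic", 0.95, 0.40),  # too easy
    Question("q2", "traffic", 0.60, 0.35),
    Question("q3", "traffic", 0.50, 0.05),  # poor selectivity
    Question("q4", "traffic", 0.45, 0.30),
    Question("q5", "aviation", 0.60, 0.50),  # other topic
]
exam = generate_question_set(pool, "traffic", size=2)
print(sorted(q.text for q in exam))
```

If fewer suitable questions exist than requested, this sketch simply returns a shorter set.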

Based on the established COMVIK approach, the board assessed whether these features contributed to the solution of a technical problem, but found that no technical contribution was present:

The Board is of the opinion that steps a) to c) are well-known tasks of people preparing exams (e.g. teachers) who for centuries have performed this task mentally or on paper. A school teacher or an instructor preparing an exam e.g. for driving test candidates or for pilots selects question items from a pool of existing questions used in previous tests and evaluates these items with respect to their facility (i.e. how well or badly the question was answered in previous exams), selectivity (i.e. how representative the question is for the ability the exam aims to test) or distribution (i.e. how the scores in previous exams were distributed among the different candidates). If they find the selected and evaluated question unsuitable for the test (e.g. because it was too easy or too difficult for the average candidate in previous tests or because it revealed being erroneous or unclear), they will discard this particular question and not include it into the test they are preparing. However, if they find the question item suitable for the test, they will consider its inclusion in the test.

It follows that steps a) to c) for adapting questions to the level of students are not related to solving a technical problem, but to the non-technical task of a test writer (e.g. a teacher).

Even when characterised quantitatively, the effects underlying the claimed selection criteria are non-technical effects, merely possibly taking place in the minds of students facing easy or difficult questions, as they might face a test which corresponds more or less to their individual intellectual abilities. The same effects are expected to be achieved by the question sets resulting from the above mentioned steps a) to c).

The arguments put forward by the appellant did not convince the board either. Firstly, the appellant argued that taking into account the correlation with answers to other questions improves the quality of each question:

The Board is of the opinion that the appellant’s argument that the invention took into account the correlation with answers to other questions to improve the quality of each question – see statement of grounds of appeal, page 1, third and fourth paragraphs – is not relevant, because in the method according to claim 8 a step of evaluating the “correlation” of a given question item with answers to other questions is not a part of the claimed method. This appears to be confirmed by the appellant’s statement in the annex to the letter dated 21 April 2020, page 9, first paragraph.

Even if the Board were to accept that the invention took into account the correlation with answers to other questions to improve the quality of each question, the effect of this feature is not of technical nature, but would only improve a teaching method or competence screening, which is equivalent to an improvement of a non-technical activity, essentially being based on mental acts.

The Board also did not share the appellant’s view that the present invention would work without human interaction, so that no part of it would need to be performed in the mind of a student:

The method according to claim 8 uses a data base comprising data sets “related to answers given to the questions in previous exams” and aims at “generating a question set for presentation to exam candidates”. A skilled person understands that the term “previous exams” does not relate to exams performed by a computer, but by human candidates so that the data sets are the result of human interaction, namely the performance of human test candidates in past exams. Moreover, the skilled person understands that the question sets generated are to be presented to human exam candidates so that it can reasonably be argued that those effects provided by steps a) to c) which are actually sought to be achieved by the invention and in the end might provide an objectively measurable difference over prior art question sets – i.e. that the question sets generated perform better than previous question sets – might possibly take place in the minds of these candidates.

The appellant further argued that the invention “consistently” improved the quality of a question data base, and hence the quality of questionnaires produced from it, in the case of multiple choice question (MCQ) exams. This argument did not succeed either:

The Board is not convinced by these arguments, because claim 8 neither comprises any means for improving the quality of the database nor is it limited to MCQ exams. However, even if the Board were to accept that the quality of the questions in a data base would improve, this cannot be qualified as a technical effect. The Board is of the opinion that steps a) to c) are performed by a person preparing an exam with questions to be presented to candidates. These types of exams existed well before the priority date of the present application, e.g. for the Chinese imperial examinations (606 – 1905). Hence, the Board agrees with the appellant that the claimed method does not change an individual question (i.e. does not improve its quality), but aims at providing an improved set of questions for an exam.

Lastly, the appellant stated that the technical character of the invention would be related both to the objectivity and efficiency of the system as well as the fact that a manual operation handled by humans simply is not possible. The question sets were produced as a data selection resulting from statistical analysis of the registered responses to the questions. The appellant also argued that the invention was completely separated from the subjectivity and illusiveness of what goes on in the mind of students and teachers:

The Board is not convinced by these arguments, because claim 8 is not limited to a “large amount of input” difficult to handle by a human operator (e.g. a teacher). It might be correct that a test writer would have difficulty mentally handling fifty thousand questions. In the Board’s view there would be no problem to handle an ensemble of e.g. fifty questions known to the test writer and used in previous tests. As claim 8 does not specify the number of questions from previous exams in the data base, the method involving steps a) to c) on a computer is not necessarily more efficient. Furthermore, if the decision of including or discarding is based e.g. on the difficulty of the selected question (i.e. the number of correct answers compared to the number of total answers), it is not plausible that the method implemented on a computer would be necessarily more objective (or less subjective) than the same method performed by a human. Moreover, claim 8 neither excludes any additional “manual operation” by a human being, nor requires any statistical analysis, “sound mathematics and statistical procedures” or “special statistical arrangements” as argued in the statement annexed to the letter dated 21 April 2020. In other words, the Board is not convinced that the claimed method is necessarily performed more efficiently and/or less subjectively by computer-based means, compared to a human test writer. Finally, the Board cannot agree that the steps a) to c) could not be a part of what the appellant calls “teaching sphere”, because the function of exams e.g. provided by a teacher to his students is to evaluate if his teaching was successful. Hence, it cannot be said that steps a) to c) are outside the “teaching sphere”.

Therefore, the Board shared the view of the examining division that the technical features of claim 8 are not more than a computer having a data storage device (i.e. a memory) for storing a data base and a processor for implementing a method involving non-technical method steps a), b) and c) on this computer. Thus, the sole technical problem derivable from the wording of claim 8 was the proper configuration and programming of known technical means (i.e. a computer having a data storage device) in order to implement non-technical (teaching) constraints, i.e. the method steps a) to c).

The application itself did not provide any specific way of how this objective technical problem is to be solved, but merely stated that “all of these may be programmed into a computer or a computer network using general programming tools”. Therefore, the Board took the view that a skilled person (i.e. a computer specialist) being provided with the above requirement specifications, i.e. steps a) to c), would implement them in a straightforward manner as part of his daily routine, that is to say without making an inventive step.

In the end, the claims were not found to involve a non-obvious technical contribution, and thus the appeal was dismissed.

More information

You can read the whole decision here: decision T 2087/15 of 21.7.2020