The European Patent Office granted a software patent on a method for improving image classification by training a semantic classifier. Here are the practical takeaways from decision T 1286/09 (Image classifier/INTELLECTUAL VENTURES) of 11.6.2015 by Technical Board of Appeal 3.5.07:

Key takeaways

The invention

This European patent application relates to the field of digital image processing, and in particular to a method for improving image classification by training a semantic classifier with a set of exemplar colour images, which represent “recomposed versions” of an exemplar image, in order to increase the diversity of training exemplars.

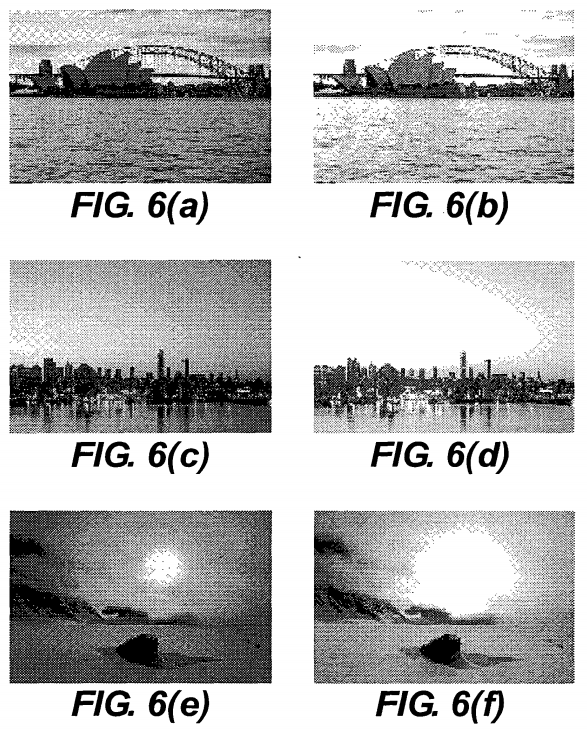

According to the application, known scene classification systems enjoy limited success on unconstrained image sets because of the incredible variety of images within most semantic classes. Exemplar-based systems should account for such variation in their training sets. However, even hundreds of exemplar images do not necessarily capture all the variability inherent in some classes. As an example of such variability, the application gives the class of sunset images which can be captured at various stages of the sunset and thus may have more or less brilliant colours and show the sun at different positions with respect to the horizon.

The gist of the invention was essentially to increase the diversity of exemplar images used to train a semantic classifier by systematically altering an exemplar colour image to generate an expanded set of images with the same salient characteristics as the initial exemplar image. More specifically, an exemplar image may be altered by means of “spatial recomposition”, i.e. by cropping its edges or by horizontally mirroring it. Another technique for expanding the set of exemplar images is to shift the colour distribution or to change the colour along the illuminant (i.e. red-blue) axis.
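The alterations described above can be sketched in a few lines of Python. This is only an illustration, not the method as claimed: the function names, the crop margin, the shift amounts, and the simple additive red/blue adjustment standing in for a shift along the illuminant axis are all assumptions made for this example.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[int, int, int]   # (red, green, blue), each in 0..255
Image = List[List[Pixel]]      # list of rows, each row a list of pixels


def crop_edges(image: Image, margin: int) -> Image:
    """Spatial recomposition: remove `margin` pixels from every edge."""
    height, width = len(image), len(image[0])
    if margin < 0 or 2 * margin >= min(height, width):
        raise ValueError("margin must be >= 0 and leave at least one pixel")
    return [row[margin:width - margin] for row in image[margin:height - margin]]


def mirror_horizontally(image: Image) -> Image:
    """Spatial recomposition: flip the image left to right."""
    return [row[::-1] for row in image]


def _clamp(value: int) -> int:
    """Keep a channel value inside the valid 0..255 range."""
    return max(0, min(255, value))


def shift_illuminant(image: Image, delta: int) -> Image:
    """Crude colour shift along a red-blue axis (assumed model).

    A positive delta adds red and removes blue; a negative delta does
    the opposite. Green is left untouched.
    """
    return [
        [(_clamp(r + delta), g, _clamp(b - delta)) for (r, g, b) in row]
        for row in image
    ]


def expand_exemplar(
    image: Image, margin: int = 1, deltas: Sequence[int] = (-20, 20)
) -> List[Image]:
    """Return the original exemplar plus its altered versions."""
    variants = [image, mirror_horizontally(image), crop_edges(image, margin)]
    variants += [shift_illuminant(image, d) for d in deltas]
    return variants
```

In such a setup, every image returned by `expand_exemplar` would keep the semantic label of the original exemplar (for instance "sunset"), so a single labelled image yields several training examples.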

Here is how the invention is defined in claim 1 (main request):

Claim 1 (main request)

Is it technical?

In this case, the board did not even question whether training a colour image classifier was technical. Accordingly, the board “just” compared the claimed combination of features with the available prior art to determine whether the invention involved an inventive step:

Document D3 does not deal with the problem of training a colour image classifier, but with the problem of improving the recognitions of an original character represented by a set of degraded bi-level images. Furthermore, it discloses the processing of many (degraded) images of a character to provide an approximation of the original character, whereas the present application teaches processing an exemplar image to generate a set of exemplar images, such that the original exemplar image and the corresponding set of exemplar images share some salient characteristics of a certain semantic class of images.

Furthermore, also the use of image degradation models for the automatic training of image classifiers referred to in D3 (see page 2, “Background of the invention”), is not comparable to the present invention. In fact, the prior art acknowledged in D3 starts from a single ideal prototype image and processes it to generate a large number of pseudorandomly degraded images which train the classifier to recognise defective images of the same symbol (D3, page 2, lines 35 to 37). The present invention, however, starts from a real-world exemplar image and alters it “spatially” or “temporally”, so as to produce a set of images which simulate other possible “real-world images” in the same image category.

Hence, in the Board’s opinion, the teaching of document D3 cannot be regarded as a suitable starting point for the present invention.

The other available prior art did not disclose anything more relevant to the claimed invention either:

As to the prior art documents D1 and D2 cited in the course of the examination, document D1 is concerned with the use of learning machines to discover knowledge from data. It relates therefore to a different field of technology and is not relevant to the present invention. Document D2 was cited by the Examining Division only as evidence that it was generally known to provide a digital representation of an image.

Therefore, the board concluded that claim 1 involved an inventive step and remitted the case to the department of first instance with the order to grant a patent on the basis of claim 1 according to the appellant’s main request.

More information

You can read the whole decision here: T 1286/09 (Image classifier/INTELLECTUAL VENTURES) of 11.6.2015